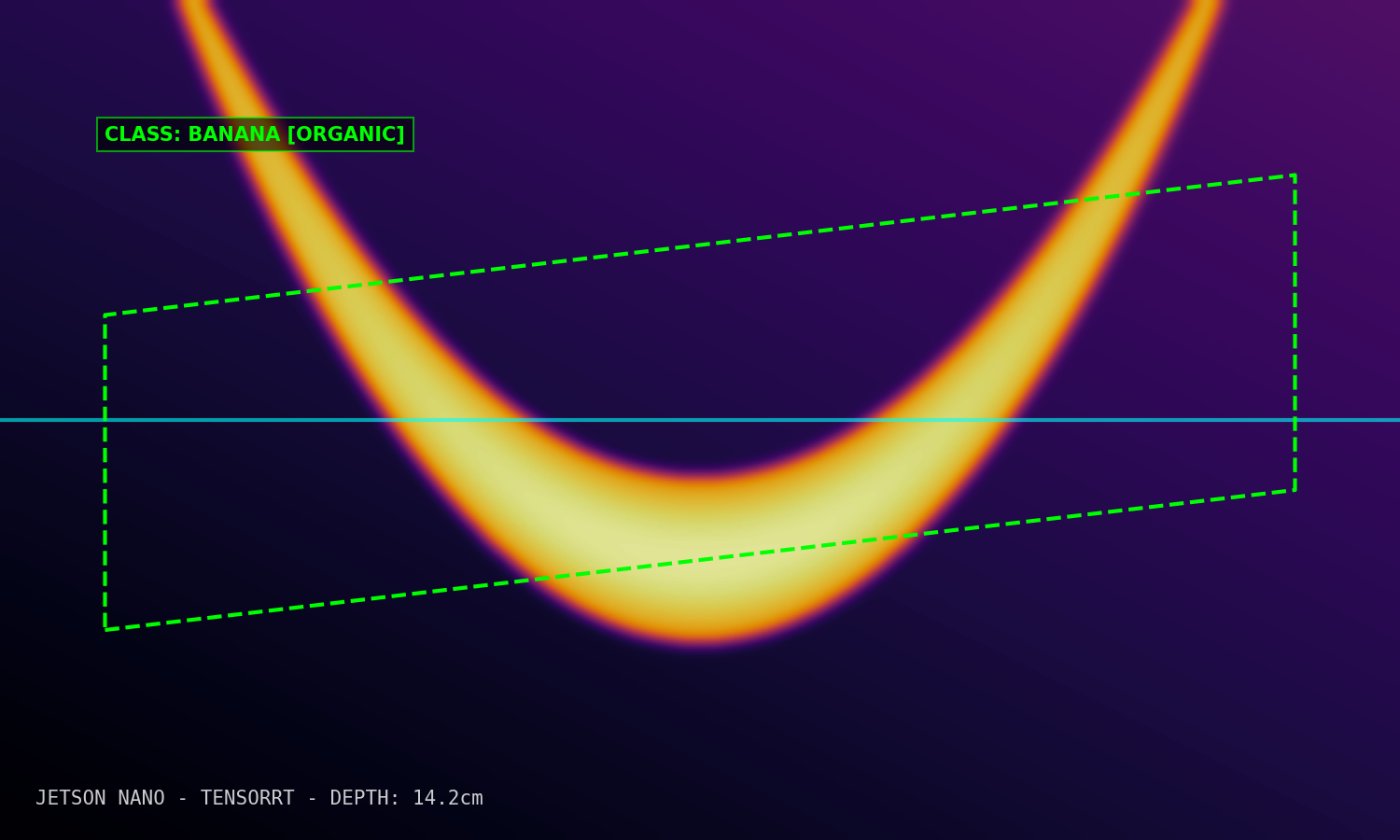

Depth Estimation (Edge AI)

Real-time monocular depth estimation on an NVIDIA Jetson Nano, producing a continuous depth map of the scene from a single camera.

Monocular Environment Mapping

LiDAR arrays are expensive and power-hungry. This project replaces the LiDAR unit with a single RGB camera and an optimized neural network that runs within the compute and power limits of an edge device.

Edge Native

Optimized to run within a 5 W power budget on the Jetson Nano.

Zero-LiDAR

Estimates per-pixel depth from RGB frames alone, with no range sensor.

TensorRT Graph

Runs FP16 inference through a TensorRT engine for substantially higher throughput.
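To make the FP16 trade-off concrete, here is a minimal sketch of what half precision does to individual values. It is not TensorRT code; it only uses Python's standard `struct` module to round numbers to IEEE 754 half precision. The helper names `to_fp16` and `max_abs_error` and the sample weights are illustrative assumptions, not part of the project.

```python
import struct


def to_fp16(value: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    # The "e" format code packs a 16-bit float; unpacking returns it as a float.
    return struct.unpack("<e", struct.pack("<e", value))[0]


def max_abs_error(values) -> float:
    """Largest absolute rounding error introduced by the FP16 round trip."""
    return max(abs(v - to_fp16(v)) for v in values)


# Hypothetical weights, chosen only to show the rounding behaviour.
weights = [0.1, -0.25, 1.0, 3.14159]
for w in weights:
    print(f"{w!r} -> {to_fp16(w)!r}")
print("max abs error:", max_abs_error(weights))
```

Values that are exact in binary (such as `-0.25` and `1.0`) survive unchanged, while others pick up a small rounding error. Each value takes two bytes instead of four, which is where the memory and bandwidth savings of FP16 come from.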

Technical Strategy

Deploying state-of-the-art vision transformer models on edge IoT devices requires aggressive model compression, here a combination of pruning and quantization.

MiDaS Pruning

Started from the standard MiDaS depth model and pruned layers with low attention weights, reducing model size by 65%.
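The selection step can be sketched as follows. This is a simplified illustration, not the project's actual pruning code: it assumes each layer already has a scalar importance score (for example, a mean attention weight magnitude) and removes the lowest-scoring layers until a target fraction of parameters is gone. The `Layer` class, the `importance` field, `select_layers_to_prune`, and the example numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    name: str
    params: int        # number of parameters in the layer
    importance: float  # hypothetical score; lower means less useful


def select_layers_to_prune(layers, target_reduction):
    """Pick the least important layers until at least `target_reduction`
    (a fraction between 0 and 1) of all parameters would be removed.

    Returns the names of the pruned layers and the fraction actually removed.
    """
    total = sum(layer.params for layer in layers)
    budget = target_reduction * total
    removed = 0
    pruned = []
    for layer in sorted(layers, key=lambda l: l.importance):
        if removed >= budget:
            break
        pruned.append(layer.name)
        removed += layer.params
    return pruned, removed / total


# Hypothetical four-block model with equal-sized blocks.
model = [
    Layer("block0", 100, 0.9),
    Layer("block1", 100, 0.1),
    Layer("block2", 100, 0.2),
    Layer("block3", 100, 0.8),
]
names, fraction = select_layers_to_prune(model, 0.5)
print(names, fraction)
```

Because whole layers are removed, the achieved reduction can overshoot the target. A pruned model would normally also need fine-tuning and re-evaluation to confirm that depth accuracy is still acceptable.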

INT8 Quantization

Used NVIDIA TensorRT to quantize the model to INT8 precision to maximize throughput on the Jetson GPU.
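As a rough illustration of what INT8 quantization does to a tensor, the sketch below applies symmetric per-tensor quantization with a max-abs scale. This is an assumption made for clarity: TensorRT derives its dynamic ranges from calibration data rather than from this simple rule, and the function names here are hypothetical.

```python
def compute_scale(values):
    """Symmetric per-tensor scale: map the largest magnitude to 127."""
    max_abs = max(abs(v) for v in values)
    return max_abs / 127.0 if max_abs > 0 else 1.0


def quantize(values, scale):
    """Map floats to integers in [-127, 127]."""
    return [max(-127, min(127, round(v / scale))) for v in values]


def dequantize(quantized, scale):
    """Recover approximate floats from the INT8 codes."""
    return [q * scale for q in quantized]


# Hypothetical activations, chosen only for illustration.
activations = [-2.0, 0.0, 0.5, 2.0]
scale = compute_scale(activations)
codes = quantize(activations, scale)
restored = dequantize(codes, scale)
print(codes)
print(restored)
```

Each value is stored in one byte instead of four, and the round-trip error for in-range values is bounded by half the scale. That bound is why the choice of dynamic range matters: a single large outlier inflates the scale and costs precision everywhere else.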

C++ Inference API

Bypassed Python entirely in production by wrapping the TensorRT engine in a C++ API that reads camera frames from V4L2 with zero-copy memory transfers.
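The zero-copy idea can be shown in a few lines, here in Python purely for illustration since the production path is C++. With V4L2's memory-mapped streaming I/O, the driver writes frames into buffers that are mapped into the application's address space, so the consumer can read them in place. In this sketch an anonymous `mmap` stands in for such a buffer; the frame size and the names `make_capture_buffer` and `frame_view` are hypothetical.

```python
import mmap

FRAME_BYTES = 640 * 480 * 3  # hypothetical RGB frame size


def make_capture_buffer(size: int) -> mmap.mmap:
    """Anonymous mapping standing in for a driver-mapped capture buffer."""
    return mmap.mmap(-1, size)


def frame_view(buffer: mmap.mmap) -> memoryview:
    """Expose the buffer to the consumer without copying its contents."""
    return memoryview(buffer)


buf = make_capture_buffer(FRAME_BYTES)
view = frame_view(buf)

# The "driver" writes a pixel; the consumer's view sees it immediately
# because both refer to the same memory.
buf[0:3] = b"\x10\x20\x30"
print(bytes(view[0:3]))

# Release the view before closing the mapping.
view.release()
buf.close()
```

The point of the design is that no per-frame `memcpy` sits between capture and inference: the inference side reads the same bytes the capture side wrote.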